I'm quite interested in this, because here at Refractions I've just finished working on a project which involved computing a TIN for the province of B.C. using 500 million masspoints. After briefly flirting with the idea of computing a single seamless TIN, I ended up computing it in a piecewise fashion. This probably turned out to be a better approach for other reasons (such as the fact that we could easily parallelize the process). However, it would be very cool to be able to compute a seamless TIN for the entire province. And the approach in this paper sounds very effective - they quote a billion triangle in 48 minutes on a laptop!

It's also exciting to see the emergence of a whole new class of algorithms focussed on dealing with VLSD's (very large spatial datasets). As the same authors point out in another paper, paradoxically, advances in computing technology can make geospatial processing more difficult

because the rate of growth of dataset size far outstrips memory capacity.

Incidentally, these guys are a bit of a dream team for computational geometry algorithms. Shewchuck is the developer of Triangle, one of the best known programs for computing constrained 2D Delaunay triangulations (which is also AFAIK one of the few available systems to seriously address robustness issues - JTS of course being another one!) Snoeyink has done research in all kinds of different areas of CG, including interesting work in computing watershed boundaries on TINs using B.C. data.

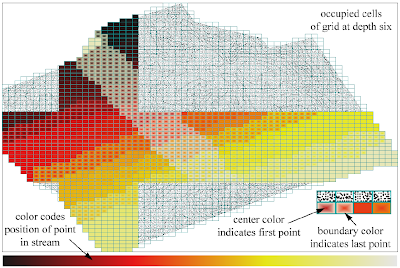

The paper also has a nice way of showing the spatial distribution of two related variables, using fill gradients:

4 comments:

For some related research on very large spatial data sets, see the STREAM project at Duke/NCSU.

Yes, I saw that site as well - and Isenburg et al mention this work quite a few times.

All very interesting. Suddenly a plethora of approaches to choose from!

I think I have seen a "live" presentation from these guys at GIScience conference last year.. very impressing

Post a Comment