But after a bit of thought I realized that there was a simple way to improve the performance of using DoubleDouble. I originally designed the DoubleDouble API to provide value semantics, since this is a 100% safe way of evaluating expressions. However, this requires creating a new object to contain the results of every operation. The alternative is to provide self-modifying methods, which update the value of the object the method is called on. In many arithmetic expressions, it's possible to use self-methods for most operations. This avoids a lot of object instantiation and provides a significant performance benefit (even with Java's ultra-efficient object allocation code).
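To make the trade-off concrete, here is a minimal sketch using a hypothetical `ToyDD` class. It is not the real DoubleDouble code: the simplified addition and the allocation counter are assumptions made purely for illustration. It evaluates (a + b) + c once with value semantics and once with a self-method on the intermediate result.

```java
/** Hypothetical stand-in for a double-double value (hi + lo); not the real DD class. */
final class ToyDD {
    static int allocations = 0; // counts constructor calls, for illustration only

    double hi;
    double lo;

    ToyDD(double hi, double lo) {
        this.hi = hi;
        this.lo = lo;
        allocations++;
    }

    static ToyDD valueOf(double x) {
        return new ToyDD(x, 0.0);
    }

    /** Value semantics: neither operand changes; the sum is a new object. */
    ToyDD add(ToyDD y) {
        return new ToyDD(hi, lo).selfAdd(y);
    }

    /** Self-method: overwrites this object with the sum and returns it for chaining. */
    ToyDD selfAdd(ToyDD y) {
        double s = hi + y.hi;
        double bb = s - hi;
        double err = (hi - (s - bb)) + (y.hi - bb); // rounding error of hi + y.hi
        err += lo + y.lo;                            // fold in both low-order words
        double newHi = s + err;
        lo = err - (newHi - s);                      // renormalize into (hi, lo)
        hi = newHi;
        return this;
    }

    double doubleValue() {
        return hi + lo;
    }
}

class SelfMethodSketch {
    /** (a + b) + c with value semantics: every add() allocates its result. */
    static ToyDD sumByValue(ToyDD a, ToyDD b, ToyDD c) {
        return a.add(b).add(c);
    }

    /** The first add() makes a fresh temporary; the next step updates it in place. */
    static ToyDD sumInPlace(ToyDD a, ToyDD b, ToyDD c) {
        return a.add(b).selfAdd(c);
    }

    public static void main(String[] args) {
        ToyDD a = ToyDD.valueOf(1.0), b = ToyDD.valueOf(1e-20), c = ToyDD.valueOf(-1.0);

        int before = ToyDD.allocations;
        ToyDD r1 = sumByValue(a, b, c);
        System.out.println("value semantics: " + (ToyDD.allocations - before) + " allocations");

        before = ToyDD.allocations;
        ToyDD r2 = sumInPlace(a, b, c);
        System.out.println("self-method:     " + (ToyDD.allocations - before) + " allocations");

        System.out.println(r1.doubleValue() + " " + r2.doubleValue());
    }
}
```

In this sketch the value-semantics version allocates two objects and the self-method version one, and both produce 1e-20 (plain double arithmetic would give 0 for the same expression). The inputs `a`, `b` and `c` are left untouched in both cases, because the self-method is only ever applied to a temporary.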

I added self-method versions of all the basic operations to the DoubleDouble API. While I was at it, I threw in operations which take double arguments as well, since this is a common use case and avoids even more allocations (as well as simplifying the code). The self-methods are all prefixed with the word "self" to make them stand out, since they are potentially dangerous if used incorrectly: they overwrite the object they are called on, so any later use of that object sees the new value. (I suppose a more Java-esque term would be "this-methods", but in this case the Smalltalk argot seems more elegant.)
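Here is a small sketch of how a self-method can go wrong. The `MutableNum` class is hypothetical and wraps a single double, so it models only the mutability, not the extended precision. The method names mirror the "self" naming convention described above, but the class is not the DoubleDouble API.

```java
/** Hypothetical single-double wrapper; it models mutability only, not extended precision. */
final class MutableNum {
    double value;

    MutableNum(double value) {
        this.value = value;
    }

    static MutableNum valueOf(double x) {
        return new MutableNum(x);
    }

    /** Value semantics: returns a new object and leaves this one alone. */
    MutableNum multiply(MutableNum y) {
        return new MutableNum(value * y.value);
    }

    /** Self-methods: overwrite this object and return it for chaining. */
    MutableNum selfMultiply(MutableNum y) { value *= y.value; return this; }
    MutableNum selfMultiply(double y)     { value *= y;       return this; }
    MutableNum selfAdd(MutableNum y)      { value += y.value; return this; }
    MutableNum selfAdd(double y)          { value += y;       return this; }

    double doubleValue() {
        return value;
    }
}

class SelfMethodHazard {
    /** Meant to compute x*x + x, but x is overwritten before it is read again. */
    static double unsafe(MutableNum x) {
        return x.selfMultiply(x).selfAdd(x).doubleValue();
    }

    /** multiply() yields a fresh temporary, so only the temporary is updated in place. */
    static double safe(MutableNum x) {
        return x.multiply(x).selfAdd(x).doubleValue();
    }

    /** With double overloads the operands never need wrapping: one allocation in total. */
    static double fromDoubles(double x) {
        return MutableNum.valueOf(x).selfMultiply(x).selfAdd(x).doubleValue();
    }

    public static void main(String[] args) {
        System.out.println(unsafe(MutableNum.valueOf(3.0))); // 18.0, not the intended 12.0
        System.out.println(safe(MutableNum.valueOf(3.0)));   // 12.0
        System.out.println(fromDoubles(3.0));                // 12.0
    }
}
```

The rule of thumb this suggests is to call self-methods only on objects the expression itself created, such as the result of a value-semantics call or a factory method, and never on an input that is read again later.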

I also decided to rename the class to DD, to improve the readability of the code. Another enhancement might be to shorten the method names to 3 characters (e.g. "mul" instead of "multiply").

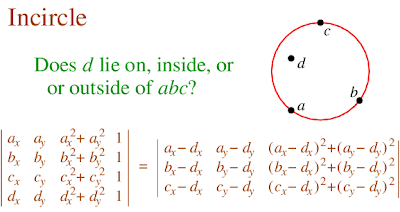

As an example, here's the inCircle code as originally implemented and as rewritten with the improved API. Note the use of the new DD.sqr(double) function to improve readability as well as performance.

Original code

```java
public static boolean isInCircleDDSlow(
    Coordinate a, Coordinate b, Coordinate c,
    Coordinate p) {
  // Promote every ordinate to a DD up front
  DD px = DD.valueOf(p.x);
  DD py = DD.valueOf(p.y);
  DD ax = DD.valueOf(a.x);
  DD ay = DD.valueOf(a.y);
  DD bx = DD.valueOf(b.x);
  DD by = DD.valueOf(b.y);
  DD cx = DD.valueOf(c.x);
  DD cy = DD.valueOf(c.y);

  // Each multiply/add below allocates a new DD for its result
  DD aTerm = (ax.multiply(ax).add(ay.multiply(ay)))
      .multiply(triAreaDDSlow(bx, by, cx, cy, px, py));
  DD bTerm = (bx.multiply(bx).add(by.multiply(by)))
      .multiply(triAreaDDSlow(ax, ay, cx, cy, px, py));
  DD cTerm = (cx.multiply(cx).add(cy.multiply(cy)))
      .multiply(triAreaDDSlow(ax, ay, bx, by, px, py));
  DD pTerm = (px.multiply(px).add(py.multiply(py)))
      .multiply(triAreaDDSlow(ax, ay, bx, by, cx, cy));

  DD sum = aTerm.subtract(bTerm).add(cTerm).subtract(pTerm);
  // p is inside the circle when the sum is positive
  boolean isInCircle = sum.doubleValue() > 0;

  return isInCircle;
}
```

Improved code

```java
public static boolean isInCircleDDFast(
    Coordinate a, Coordinate b, Coordinate c,
    Coordinate p) {
  // DD.sqr(double) returns a new DD, so the chained self-methods
  // only ever overwrite temporaries created in this method
  DD aTerm = (DD.sqr(a.x).selfAdd(DD.sqr(a.y)))
      .selfMultiply(triAreaDDFast(b, c, p));
  DD bTerm = (DD.sqr(b.x).selfAdd(DD.sqr(b.y)))
      .selfMultiply(triAreaDDFast(a, c, p));
  DD cTerm = (DD.sqr(c.x).selfAdd(DD.sqr(c.y)))
      .selfMultiply(triAreaDDFast(a, b, p));
  DD pTerm = (DD.sqr(p.x).selfAdd(DD.sqr(p.y)))
      .selfMultiply(triAreaDDFast(a, b, c));

  // aTerm is overwritten here; it is not used again afterwards
  DD sum = aTerm.selfSubtract(bTerm).selfAdd(cTerm).selfSubtract(pTerm);
  // p is inside the circle when the sum is positive
  boolean isInCircle = sum.doubleValue() > 0;

  return isInCircle;
}
```

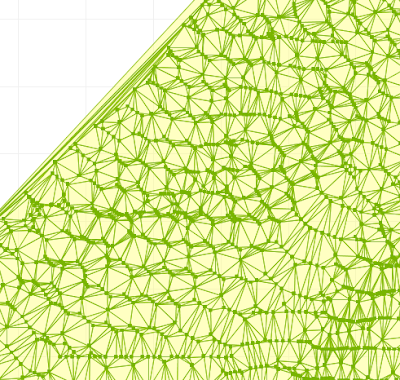

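The table below summarizes the performance increase provided by using self-methods for inCircle. The penalty for using DD for improved robustness is now down to about 1.6x in the smallest and largest runs (about 2.3x in the middle one), compared with 2x to 3x for the original value-semantics code.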

Timings for Delaunay triangulation using inCircle predicate implemented using double-precision (DP), DoubleDouble (DD), and DoubleDouble with in-place operations (DD-self). All times in milliseconds.

| # pts | DP | DD-self | DD |
|---|---|---|---|
| 1,000 | 30 | 47 | 80 |
| 10,000 | 334 | 782 | 984 |
| 100,000 | 7953 | 12578 | 16000 |
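
The slowdown factors can be checked directly from the timings. The snippet below is just that arithmetic, with the three table rows copied in order. The class and method names are made up for this illustration.

```java
import java.util.Locale;

class SlowdownSketch {
    /** Ratio of a DD timing to the plain double-precision timing for the same run. */
    static double slowdown(double ddMillis, double dpMillis) {
        return ddMillis / dpMillis;
    }

    public static void main(String[] args) {
        // Timings in milliseconds, copied from the table rows in order.
        double[] dp     = {30, 334, 7953};
        double[] ddSelf = {47, 782, 12578};
        double[] dd     = {80, 984, 16000};

        for (int i = 0; i < dp.length; i++) {
            System.out.println(String.format(Locale.ROOT,
                "row %d: DD-self %.2fx, DD %.2fx",
                i + 1, slowdown(ddSelf[i], dp[i]), slowdown(dd[i], dp[i])));
        }
        // row 1: DD-self 1.57x, DD 2.67x
        // row 2: DD-self 2.34x, DD 2.95x
        // row 3: DD-self 1.58x, DD 2.01x
    }
}
```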